Machine learning (ML) is revolutionizing solutions across industries and driving new forms of insights and intelligence from data. Many ML algorithms train over large datasets, generalizing the patterns they find in the data and inferring outcomes from those patterns as new, unseen records are processed. Usually, if the dataset or model is too large to be trained on a single instance, distributed training allows multiple instances within a cluster to be used, distributing either data or model partitions across those instances during the training process. Native support for distributed training is offered through the Amazon SageMaker SDK, along with example notebooks in popular frameworks.

However, security and privacy regulations within or across organizations sometimes mean that the data is decentralized across multiple accounts or in different Regions, and it can't be centralized into one account or moved across Regions. In this case, federated learning (FL) should be considered to get a generalized model on the whole data.

In this post, we discuss how to implement federated learning on Amazon SageMaker to run ML with decentralized training data.

What is federated learning?

Federated learning is an ML approach that allows multiple separate training sessions to run in parallel across large boundaries (for example, geographically) and to aggregate the results into a generalized model (the global model) in the process. More specifically, each training session uses its own dataset and produces its own local model. Local models from different training sessions are aggregated (for example, by model weight aggregation) into a global model during the training process. This approach stands in contrast to centralized ML techniques, where datasets are merged for one training session.
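Weight aggregation is the step that turns local models into a global one. The following is a minimal sketch of the example-weighted average used by FedAvg (the strategy this post uses later). It assumes each local model is a flat list of floats and that each client also reports how many training examples it used. federated_average is a hypothetical helper, not a Flower API.

```python
from typing import List, Tuple


def federated_average(results: List[Tuple[List[float], int]]) -> List[float]:
    """Average local weight vectors, weighting each client by its number of examples.

    results holds one (weights, num_examples) pair per client; all weight
    vectors must have the same length.
    """
    total_examples = sum(n for _, n in results)
    if total_examples == 0:
        raise ValueError("at least one client must report training examples")
    num_weights = len(results[0][0])
    global_weights = [0.0] * num_weights
    for weights, n in results:
        if len(weights) != num_weights:
            raise ValueError("all clients must send weight vectors of the same length")
        for i, w in enumerate(weights):
            global_weights[i] += w * n / total_examples
    return global_weights


# The client with 300 examples pulls the average toward its weights.
print(federated_average([([1.0, 2.0], 100), ([3.0, 6.0], 300)]))  # [2.5, 5.0]
```

Weighting by example count keeps a client with little data from having the same influence as one with a lot of data. A plain unweighted mean would treat every client equally.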

Federated learning vs. distributed training in the cloud

When both approaches run in the cloud, distributed training happens in one Region within one account, and the training data starts out in a centralized training session or job. During distributed training, the dataset is split into smaller subsets. Depending on the strategy (data parallelism or model parallelism), the subsets are sent to different training nodes or pass through the nodes of a training cluster. This means individual data doesn't necessarily stay on one node of the cluster.

In contrast, with federated learning, training usually occurs in multiple separate accounts or across Regions. Each account or Region has its own training instances. The training data stays decentralized across accounts or Regions from beginning to end. During the federated learning process, individual data is read only by the training session or job in its own account or Region.

Flower federated learning framework

Several open-source frameworks are available for federated learning, such as FATE, Flower, PySyft, OpenFL, FedML, NVFlare, and TensorFlow Federated. When choosing an FL framework, we usually consider its support for model types, ML frameworks, and devices or operating systems. We also need to consider the framework's extensibility and package size, so that it runs efficiently in the cloud. In this post, we choose Flower, an easily extensible, customizable, and lightweight framework, for the FL implementation on SageMaker.

Flower is a comprehensive FL framework that distinguishes itself from existing frameworks by offering new facilities to run large-scale FL experiments and by supporting richly heterogeneous FL device scenarios. FL solves challenges related to data privacy and scalability in scenarios where sharing data is not possible.

Design principles and implementation of Flower FL

Flower FL is language-agnostic and ML framework-agnostic by design, is fully extensible, and can incorporate emerging algorithms, training strategies, and communication protocols. Flower is open source under the Apache 2.0 License.

The conceptual architecture of the FL implementation is described in the paper Flower: A Friendly Federated Learning Framework and is highlighted in the following figure.

In this architecture, edge clients live on real edge devices and communicate with the server over RPC. Virtual clients, on the other hand, consume close to zero resources when inactive and only load the model and data into memory when the client is selected for training or evaluation.

The Flower server builds the strategy and the configurations to be sent to the Flower clients. It serializes these configuration dictionaries (config dicts for short) to their ProtoBuf representation and transports them to the client using gRPC, where they are deserialized back into Python dictionaries.

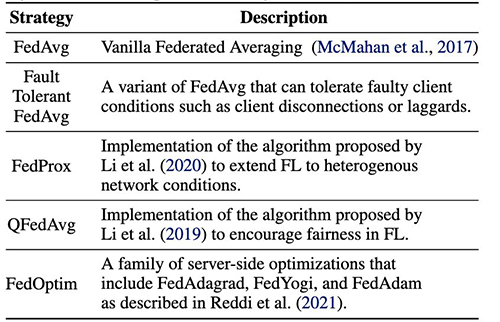

Flower FL strategies

Flower allows customization of the learning process through the strategy abstraction. The strategy defines the entire federation process. It specifies parameter initialization (server-initialized or client-initialized), the minimum number of available clients required to start a run, the weight of each client's contribution, and the training and evaluation details.

Flower has an extensive implementation of FL averaging algorithms and a robust communication stack. For a list of the averaging algorithms implemented and the associated research papers, refer to the following table, from Flower: A Friendly Federated Learning Framework.

Federated learning with SageMaker: Solution architecture

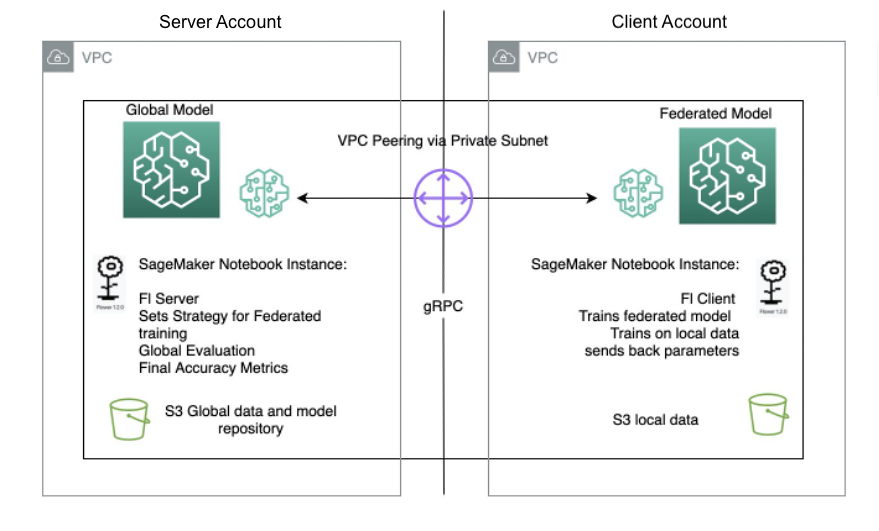

A federated learning architecture using SageMaker with the Flower framework is implemented on top of bi-directional gRPC (foundation) streams. gRPC defines the types of messages exchanged and uses compilers to generate efficient implementations for Python, but it can also generate implementations for other languages, such as Java or C++.

The Flower clients receive instructions (messages) as raw byte arrays over the network. The clients then deserialize and run each instruction (for example, training on local data). The results (model parameters and weights) are then serialized and communicated back to the server.
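Flower performs this serialization internally. The following minimal sketch illustrates the round trip without extra dependencies: it packs a flat list of float weights into raw bytes and back with Python's struct module. The length-prefixed, little-endian float64 layout is an assumption for illustration, not Flower's wire format.

```python
import struct
from typing import List


def weights_to_bytes(weights: List[float]) -> bytes:
    # 4-byte unsigned count, followed by that many little-endian float64 values.
    return struct.pack(f"<I{len(weights)}d", len(weights), *weights)


def bytes_to_weights(payload: bytes) -> List[float]:
    # Read the count first, then unpack exactly that many doubles after it.
    (count,) = struct.unpack_from("<I", payload, 0)
    return list(struct.unpack_from(f"<{count}d", payload, 4))


payload = weights_to_bytes([0.5, -1.25, 3.0])
print(len(payload))               # 28 (4-byte header + 3 * 8 bytes)
print(bytes_to_weights(payload))  # [0.5, -1.25, 3.0]
```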

The server/client architecture for Flower FL is defined in SageMaker using notebook instances in different accounts, in the same Region, acting as the Flower server and the Flower client. The training and evaluation strategies are defined on the server, along with the global parameters. The configuration is then serialized and sent to the client over VPC peering.

The client notebook instance starts a SageMaker training job that runs a custom script. The script instantiates the Flower client, which deserializes and reads the server configuration, runs the training, and sends the resulting parameters back.

The last step occurs on the server. When the number of rounds and clients stipulated in the server strategy is complete, the server evaluates the newly aggregated parameters. The evaluation uses a testing dataset that exists only on the server and produces the updated accuracy metrics.
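The following minimal, dependency-free simulation makes the round structure concrete. Each client fits locally on its own data, the server averages the returned parameters weighted by example count, and then evaluates the aggregate on a test set that only the server holds. It assumes a toy one-feature linear model in place of the post's scikit-learn logistic regression, and all function names are hypothetical.

```python
from typing import List, Tuple

Params = Tuple[float, float]          # (w, b) for the toy model y = w * x + b
Dataset = List[Tuple[float, float]]   # (x, y) pairs


def mse(params: Params, data: Dataset) -> float:
    w, b = params
    return sum((w * x + b - y) ** 2 for x, y in data) / len(data)


def local_fit(params: Params, data: Dataset, lr: float = 0.01, epochs: int = 5) -> Tuple[Params, int]:
    """Client side: start from the global params and run gradient descent on local data only."""
    w, b = params
    n = len(data)
    for _ in range(epochs):
        grad_w = sum(2 * (w * x + b - y) * x for x, y in data) / n
        grad_b = sum(2 * (w * x + b - y) for x, y in data) / n
        w, b = w - lr * grad_w, b - lr * grad_b
    return (w, b), n  # only parameters and an example count leave the client


def aggregate(results: List[Tuple[Params, int]]) -> Params:
    """Server side: FedAvg-style average weighted by the number of local examples."""
    total = sum(n for _, n in results)
    w = sum(p[0] * n for p, n in results) / total
    b = sum(p[1] * n for p, n in results) / total
    return (w, b)


def run_federation(clients: List[Dataset], server_test: Dataset, num_rounds: int) -> List[float]:
    params: Params = (0.0, 0.0)
    history = [mse(params, server_test)]  # loss before any training
    for _ in range(num_rounds):
        results = [local_fit(params, data) for data in clients]
        params = aggregate(results)
        history.append(mse(params, server_test))  # centralized evaluation on server-only data
    return history


# Two clients hold private samples of y = 2x + 1; the test set exists only on the server.
client_a = [(0.0, 1.0), (1.0, 3.0), (2.0, 5.0)]
client_b = [(3.0, 7.0), (4.0, 9.0)]
server_test = [(5.0, 11.0), (6.0, 13.0)]

history = run_federation([client_a, client_b], server_test, num_rounds=20)
print(f"test MSE before: {history[0]:.2f}, after 20 rounds: {history[-1]:.2f}")
```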

The following diagram illustrates the architecture of the FL setup on SageMaker with the Flower package.

Implement federated learning using SageMaker

SageMaker is a fully managed ML service. With SageMaker, data scientists and developers can quickly build and train ML models, and then deploy them into a production-ready hosted environment.

In this post, we demonstrate how to use the managed ML platform to provide a notebook environment and perform federated learning across AWS accounts using SageMaker training jobs. The raw training data never leaves the account that owns it; only the derived weights are sent across the peered connection.

We highlight the following core components in this post:

- Networking – SageMaker allows quick setup of a default networking configuration while also letting you fully customize the networking to your organization's requirements. We use a VPC peering configuration within the Region in this example.

- Cross-account access settings – To allow a user in the server account to start a model training job in the client account, we delegate access across accounts using AWS Identity and Access Management (IAM) roles. This way, a user in the server account doesn't have to sign out of that account and sign in to the client account to perform actions on SageMaker. This setting is only for starting SageMaker training jobs. It doesn't grant any cross-account data access or sharing.

- Implementing federated learning client code in the client account and server code in the server account – We implement the federated learning client code in the client account by using the Flower package and SageMaker managed training. Meanwhile, we implement the server code in the server account by using the Flower package.

Set up VPC peering

A VPC peering connection is a networking connection between two VPCs that enables you to route traffic between them using private IPv4 or IPv6 addresses. Instances in either VPC can communicate with each other as if they were within the same network.

To set up a VPC peering connection, first create a request to peer with another VPC. You can request a VPC peering connection with another VPC in the same account or, as in our use case, with a VPC in a different AWS account. To activate the connection, the owner of the other VPC must accept the request. For more details about VPC peering, refer to Create a VPC peering connection.
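If you prefer to script this step rather than use the console, the following is a minimal sketch using the Boto3 EC2 operations create_vpc_peering_connection, accept_vpc_peering_connection, and create_route. It assumes you already have an EC2 client for each account. The clients are passed in, so the logic can be exercised without AWS credentials. The function names are hypothetical.

```python
def peer_vpcs(requester_ec2, accepter_ec2, requester_vpc_id: str, accepter_vpc_id: str,
              accepter_account_id: str, region: str) -> str:
    """Request a peering connection from one account's VPC and accept it from the other account.

    requester_ec2 and accepter_ec2 are Boto3 EC2 clients created with each account's credentials.
    """
    response = requester_ec2.create_vpc_peering_connection(
        VpcId=requester_vpc_id,
        PeerVpcId=accepter_vpc_id,
        PeerOwnerId=accepter_account_id,
        PeerRegion=region,
    )
    peering_id = response["VpcPeeringConnection"]["VpcPeeringConnectionId"]
    # The accepter VPC's owner must accept; this succeeds once the request is pending acceptance.
    accepter_ec2.accept_vpc_peering_connection(VpcPeeringConnectionId=peering_id)
    return peering_id


def add_peering_route(ec2, route_table_id: str, peer_cidr: str, peering_id: str) -> None:
    """Send traffic destined for the peer VPC's CIDR block through the peering connection."""
    ec2.create_route(
        RouteTableId=route_table_id,
        DestinationCidrBlock=peer_cidr,
        VpcPeeringConnectionId=peering_id,
    )
```

For traffic to actually flow, call add_peering_route in both accounts, each with the other VPC's CIDR block. The security groups must also allow the port your Flower server listens on.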

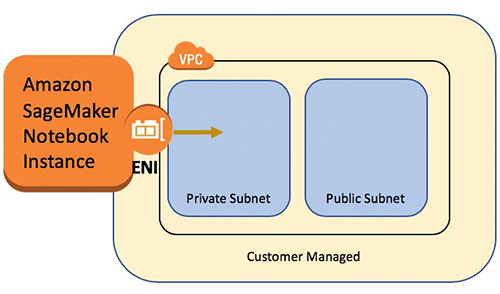

Launch SageMaker notebook instances in VPCs

A SageMaker notebook instance provides a Jupyter notebook app through a fully managed ML Amazon Elastic Compute Cloud (Amazon EC2) instance. SageMaker Jupyter notebooks are used to perform advanced data exploration, create training jobs, deploy models to SageMaker hosting, and test or validate your models.

The notebook instance has a variety of networking configurations available. In this setup, the notebook instance runs within a private subnet of the VPC and doesn't have direct internet access.
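The following sketch builds the request for such a notebook with the Boto3 create_notebook_instance parameters SubnetId, SecurityGroupIds, and DirectInternetAccess. The helper name and the default instance type are assumptions.

```python
def notebook_request(name: str, role_arn: str, subnet_id: str, security_group_id: str,
                     instance_type: str = "ml.t3.medium") -> dict:
    """Build create_notebook_instance arguments for a notebook in a private subnet."""
    return {
        "NotebookInstanceName": name,
        "InstanceType": instance_type,
        "RoleArn": role_arn,
        "SubnetId": subnet_id,                  # private subnet of the peered VPC
        "SecurityGroupIds": [security_group_id],
        "DirectInternetAccess": "Disabled",     # no SageMaker-provided internet access
    }


# Usage (requires AWS credentials):
#   import boto3
#   boto3.client("sagemaker").create_notebook_instance(**notebook_request(...))
```

With direct internet access disabled, the notebook can reach AWS APIs, Amazon S3, and package repositories only through what the VPC provides, such as VPC endpoints or a NAT gateway.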

Configure cross-account access settings

Configuring cross-account access takes two steps to delegate access from the server account to the client account by using IAM roles:

- Create an IAM role in the client account.

- Grant access to the role in the server account.

For detailed steps to set up a similar scenario, refer to Delegate access across AWS accounts using IAM roles.

In the client account, we create an IAM role called FL-kickoff-client-job and attach the policy FL-sagemaker-actions to it. The FL-sagemaker-actions policy has the following JSON content:
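The following minimal sketch builds such a permissions policy in Python, so it can be checked and then applied with Boto3. It assumes the role only needs to start, describe, and stop training jobs and to pass the client account's SageMaker execution role to those jobs. The action list, the helper name, and the role name are assumptions; your jobs may need additional permissions.

```python
import json


def sagemaker_actions_policy(client_account_id: str, execution_role_name: str) -> str:
    """Hypothetical FL-sagemaker-actions policy: manage training jobs, nothing else."""
    policy = {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Effect": "Allow",
                "Action": [
                    "sagemaker:CreateTrainingJob",
                    "sagemaker:DescribeTrainingJob",
                    "sagemaker:StopTrainingJob",
                ],
                "Resource": "*",
            },
            {
                # Creating a job requires passing the client account's SageMaker execution role.
                "Effect": "Allow",
                "Action": "iam:PassRole",
                "Resource": f"arn:aws:iam::{client_account_id}:role/{execution_role_name}",
            },
        ],
    }
    return json.dumps(policy, indent=2)
```

You could then create the policy with the IAM create_policy operation (PolicyName="FL-sagemaker-actions") and attach it to the role with attach_role_policy.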

We then modify the trust policy in the trust relationships of the FL-kickoff-client-job role:
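A trust policy of this kind names the server account as the principal allowed to call sts:AssumeRole. The following is a minimal sketch; the helper name is hypothetical.

```python
import json


def kickoff_role_trust_policy(server_account_id: str) -> str:
    """Trust policy that lets principals in the server account assume FL-kickoff-client-job."""
    policy = {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Effect": "Allow",
                # ":root" trusts the account as a whole; IAM policies in that
                # account then decide which users may actually assume the role.
                "Principal": {"AWS": f"arn:aws:iam::{server_account_id}:root"},
                "Action": "sts:AssumeRole",
            }
        ],
    }
    return json.dumps(policy, indent=2)
```

You could apply it with the IAM update_assume_role_policy operation (RoleName="FL-kickoff-client-job").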

In the server account, we add permissions to an existing user (for example, developer) that allow switching to the FL-kickoff-client-job role in the client account. To do this, we create an inline policy called FL-allow-kickoff-client-job and attach it to the user. The following is the policy JSON content:
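The following minimal sketch builds an inline policy that allows sts:AssumeRole on exactly one role ARN in the client account. The helper name is hypothetical.

```python
import json


def allow_kickoff_policy(client_account_id: str, role_name: str = "FL-kickoff-client-job") -> str:
    """Inline policy for the server-account user: may assume only the kickoff role."""
    policy = {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Effect": "Allow",
                "Action": "sts:AssumeRole",
                "Resource": f"arn:aws:iam::{client_account_id}:role/{role_name}",
            }
        ],
    }
    return json.dumps(policy, indent=2)
```

You could attach it with the IAM put_user_policy operation (UserName="developer", PolicyName="FL-allow-kickoff-client-job").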

Sample dataset and data preparation

In this post, we use a curated dataset for fraud detection in Medicare providers' data, released by the Centers for Medicare & Medicaid Services (CMS). The data is split into a training dataset and a testing dataset. Because the majority of the data is non-fraud, we apply SMOTE to balance the training dataset, and then further split the training dataset into training and validation parts. Both the training and validation data are uploaded to an Amazon Simple Storage Service (Amazon S3) bucket for model training in the client account. The testing dataset is used in the server account for testing purposes only. Details of the data preparation code are in the following notebook.
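To illustrate the balancing step, here is a simplified, dependency-free version of SMOTE, followed by the train/validation split in the order described above. In practice you would typically use a library implementation, such as SMOTE from imbalanced-learn. The sketch assumes label 1 marks the minority (fraud) class, and the function names are hypothetical.

```python
import random
from typing import List, Sequence, Tuple


def smote(minority: Sequence[Sequence[float]], n_new: int, k: int = 5, seed: int = 0) -> List[List[float]]:
    """Simplified SMOTE: each synthetic point lies on the segment between a random
    minority sample and one of its k nearest minority neighbors."""
    if len(minority) < 2:
        raise ValueError("need at least two minority samples")
    rng = random.Random(seed)
    k = min(k, len(minority) - 1)
    synthetic = []
    for _ in range(n_new):
        i = rng.randrange(len(minority))
        base = minority[i]
        # k nearest other minority samples by squared Euclidean distance
        neighbors = sorted(
            (j for j in range(len(minority)) if j != i),
            key=lambda j: sum((a - b) ** 2 for a, b in zip(base, minority[j])),
        )[:k]
        neighbor = minority[rng.choice(neighbors)]
        gap = rng.random()  # random position along the segment, in [0, 1)
        synthetic.append([a + gap * (b - a) for a, b in zip(base, neighbor)])
    return synthetic


def balance(X: List[List[float]], y: List[int], seed: int = 0) -> Tuple[List[List[float]], List[int]]:
    """Oversample class 1 (assumed minority, fraud) until both classes have equal counts."""
    minority = [x for x, label in zip(X, y) if label == 1]
    n_new = sum(1 for label in y if label == 0) - len(minority)
    synthetic = smote(minority, n_new, seed=seed)
    return list(X) + synthetic, list(y) + [1] * len(synthetic)


def train_val_split(X, y, val_fraction: float = 0.2, seed: int = 0):
    """Shuffle, then hold out val_fraction of the (already balanced) training data."""
    idx = list(range(len(X)))
    random.Random(seed).shuffle(idx)
    n_val = int(len(idx) * val_fraction)
    val, train = idx[:n_val], idx[n_val:]
    return ([X[i] for i in train], [y[i] for i in train],
            [X[i] for i in val], [y[i] for i in val])
```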

Using the SageMaker pre-built Docker images for the scikit-learn framework and the SageMaker managed training process, we train a logistic regression model on this dataset with federated learning.

Implement a federated learning client in the client account

In the client account's SageMaker notebook instance, we prepare a client.py script and a utils.py script. The client.py file contains the code for the client, and the utils.py file contains some utility functions needed for training. We use the scikit-learn package to build the logistic regression model.

In client.py, we define a Flower client. The client is derived from the class fl.client.NumPyClient and needs to define the following three methods:

- get_parameters – It returns the current local model parameters. The utility function get_model_parameters does this.

- fit – It defines the steps to train the model on the training data in the client's account. It also receives the global model parameters and other configuration information from the server. We update the local model's parameters with the received global parameters and continue training it on the dataset in the client account. This method then sends three things back to the server: the local model's parameters after training, the size of the training set, and a dictionary communicating arbitrary values.

- evaluate – It evaluates the provided parameters using the validation data in the client account. It returns the loss to the server, together with other details such as the size of the validation set and the accuracy.

The following is a code snippet for the Flower client definition:
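The following dependency-free sketch shows the same three-method shape. It uses a tiny hand-written logistic regression (TinyLogReg, hypothetical) in place of scikit-learn's LogisticRegression, and plain lists in place of NumPy arrays. In client.py the class would subclass fl.client.NumPyClient and Flower would call these methods; here they are called directly.

```python
import math
from typing import Dict, List, Tuple


class TinyLogReg:
    """Stand-in for scikit-learn's LogisticRegression: one weight per feature plus an intercept."""

    def __init__(self, n_features: int, lr: float = 0.1, epochs: int = 50):
        self.coef_ = [0.0] * n_features
        self.intercept_ = 0.0
        self.lr, self.epochs = lr, epochs

    def positive_proba(self, x: List[float]) -> float:
        z = sum(w * v for w, v in zip(self.coef_, x)) + self.intercept_
        return 1.0 / (1.0 + math.exp(-z))

    def fit(self, X: List[List[float]], y: List[int]) -> "TinyLogReg":
        # Full-batch gradient descent on the log loss, starting from the current parameters.
        n = len(X)
        for _ in range(self.epochs):
            errors = [self.positive_proba(x) - t for x, t in zip(X, y)]
            self.coef_ = [w - self.lr * sum(e * x[j] for e, x in zip(errors, X)) / n
                          for j, w in enumerate(self.coef_)]
            self.intercept_ -= self.lr * sum(errors) / n
        return self


class FlowerClientSketch:
    """Same methods as fl.client.NumPyClient; in client.py this would subclass NumPyClient."""

    def __init__(self, model: TinyLogReg, train, val):
        self.model = model
        self.X_train, self.y_train = train
        self.X_val, self.y_val = val

    def get_parameters(self, config: Dict) -> List[List[float]]:
        return [list(self.model.coef_), [self.model.intercept_]]

    def _set_parameters(self, parameters: List[List[float]]) -> None:
        self.model.coef_ = list(parameters[0])
        self.model.intercept_ = parameters[1][0]

    def fit(self, parameters, config) -> Tuple[List[List[float]], int, Dict]:
        self._set_parameters(parameters)            # start from the global model
        self.model.fit(self.X_train, self.y_train)  # train on local data only
        return self.get_parameters(config), len(self.X_train), {}

    def evaluate(self, parameters, config) -> Tuple[float, int, Dict]:
        self._set_parameters(parameters)
        eps = 1e-15  # clip probabilities so log() never sees 0
        probs = [min(max(self.model.positive_proba(x), eps), 1 - eps) for x in self.X_val]
        loss = -sum(t * math.log(p) + (1 - t) * math.log(1 - p)
                    for p, t in zip(probs, self.y_val)) / len(probs)
        accuracy = sum((p >= 0.5) == (t == 1) for p, t in zip(probs, self.y_val)) / len(probs)
        return loss, len(self.X_val), {"accuracy": accuracy}
```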

We then use SageMaker script mode to prepare the rest of the client.py file. This includes defining the parameters that will be passed to SageMaker training, loading the training and validation data, initializing and training the model on the client, setting up the Flower client to communicate with the server, and finally saving the trained model.
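In script mode, SageMaker passes hyperparameters to the script as command-line flags and exposes data and model paths through SM_* environment variables. The following sketch shows argument parsing that reads SM_MODEL_DIR and the SM_CHANNEL_TRAIN and SM_CHANNEL_VALIDATION variables, which assumes input channels named "train" and "validation". The --server-address and --max-iter hyperparameters and the local fallback paths are assumptions.

```python
import argparse
import os


def parse_args(argv=None):
    """Parse script-mode arguments, falling back to local defaults outside SageMaker."""
    parser = argparse.ArgumentParser()
    # Hypothetical hyperparameters: where the Flower server listens, and a training setting.
    parser.add_argument("--server-address", type=str, default="127.0.0.1:8080")
    parser.add_argument("--max-iter", type=int, default=100)
    # SageMaker sets these environment variables inside the training container.
    parser.add_argument("--model-dir", type=str, default=os.environ.get("SM_MODEL_DIR", "model"))
    parser.add_argument("--train", type=str, default=os.environ.get("SM_CHANNEL_TRAIN", "data/train"))
    parser.add_argument("--validation", type=str,
                        default=os.environ.get("SM_CHANNEL_VALIDATION", "data/validation"))
    return parser.parse_args(argv)
```

After parsing, the rest of client.py would load data from args.train and args.validation and start the client (in Flower 1.3, for example, with fl.client.start_numpy_client(server_address=args.server_address, client=...)). It would then save the model under args.model_dir, so that SageMaker packages it into model.tar.gz.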

utils.py includes a few utility functions that are called in client.py:

- get_model_parameters – It returns the scikit-learn LogisticRegression model parameters.

- set_model_params – It sets the model's parameters.

- set_initial_params – It initializes the parameters of the model as zeros. This is required because the server asks the client for initial model parameters at launch. However, in the scikit-learn framework, LogisticRegression model parameters are not initialized until model.fit() is called.

- load_data – It loads the training and testing data.

- save_model – It saves the model as a .joblib file.
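The following is a sketch of the three parameter helpers. It assumes a binary classifier and the attribute names scikit-learn's LogisticRegression uses (coef_, intercept_, classes_, fit_intercept). With real scikit-learn these values would be NumPy arrays created with np.zeros. load_data and save_model (for example, via joblib.dump) are omitted.

```python
from types import SimpleNamespace
from typing import List


def get_model_parameters(model) -> List:
    """Return [coef_, intercept_], or just [coef_] when the model has no intercept."""
    if model.fit_intercept:
        return [model.coef_, model.intercept_]
    return [model.coef_]


def set_model_params(model, params: List):
    model.coef_ = params[0]
    if model.fit_intercept:
        model.intercept_ = params[1]
    return model


def set_initial_params(model, n_features: int) -> None:
    """Give an unfitted binary classifier zero-valued coef_, intercept_, and classes_."""
    # Binary LogisticRegression stores coef_ with shape (1, n_features) and intercept_ with
    # shape (1,). Nested lists stand in for np.zeros(...) so the sketch runs without NumPy.
    model.classes_ = [0, 1]
    model.coef_ = [[0.0] * n_features]
    if model.fit_intercept:
        model.intercept_ = [0.0]


model = SimpleNamespace(fit_intercept=True)  # stand-in for LogisticRegression()
set_initial_params(model, n_features=3)
print(get_model_parameters(model))  # [[[0.0, 0.0, 0.0]], [0.0]]
```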

Because Flower isn't installed in the SageMaker pre-built scikit-learn Docker container, we list flwr==1.3.0 in a requirements.txt file.

We put all three files (client.py, utils.py, and requirements.txt) in one folder and package it as a gzip-compressed tar archive. The .tar.gz file (named source.tar.gz in this post) is then uploaded to an S3 bucket in the client account.
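The following is a minimal sketch of the packaging step with Python's tarfile module. It assumes the three files sit in one local folder. package_source is a hypothetical helper.

```python
import tarfile
from pathlib import Path


def package_source(src_dir: str, output: str = "source.tar.gz") -> str:
    """Create a gzip-compressed tarball with client.py, utils.py, and requirements.txt at its root."""
    with tarfile.open(output, "w:gz") as tar:
        for name in ["client.py", "utils.py", "requirements.txt"]:
            # arcname keeps each file at the top level of the extracted directory,
            # so the entry point can be referenced simply as client.py.
            tar.add(str(Path(src_dir) / name), arcname=name)
    return output
```

You can then upload the archive with Boto3, for example boto3.client("s3").upload_file("source.tar.gz", bucket_name, object_key), using your own bucket and key.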

Implement a federated learning server in the server account

In the server account, we prepare the code in a Jupyter notebook. It has two parts. First, the server assumes a role to start a training job in the client account; then the server federates the model using Flower.

Assume a role to run the training job in the client account

We use the Boto3 Python SDK to set up an AWS Security Token Service (AWS STS) client that assumes the FL-kickoff-client-job role. We then set up a SageMaker client with those credentials, so that we can run a training job in the client account through the SageMaker managed training process:
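The following minimal sketch calls the STS assume_role operation and turns the returned temporary credentials into keyword arguments for boto3.client. The helper name and the session name are assumptions. The credentials are temporary (one hour by default), so long-running orchestration may need to assume the role again.

```python
def assumed_role_credentials(sts_client, role_arn: str, session_name: str = "fl-server-session") -> dict:
    """Assume the client-account role and return keyword arguments for boto3.client(...)."""
    response = sts_client.assume_role(RoleArn=role_arn, RoleSessionName=session_name)
    creds = response["Credentials"]
    return {
        "aws_access_key_id": creds["AccessKeyId"],
        "aws_secret_access_key": creds["SecretAccessKey"],
        "aws_session_token": creds["SessionToken"],
    }


# Usage in the server account's notebook (requires AWS credentials):
#   import boto3
#   kwargs = assumed_role_credentials(
#       boto3.client("sts"), "arn:aws:iam::<client-account-id>:role/FL-kickoff-client-job")
#   sm_client = boto3.client("sagemaker", region_name="<region>", **kwargs)
```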

Using the assumed role, we create a SageMaker training job in the client account. The training job uses the SageMaker built-in scikit-learn framework. Note that all the S3 buckets and the SageMaker IAM role in the following code snippet belong to the client account:
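The following sketch builds a create_training_job request for a script-mode framework container. The sagemaker_program and sagemaker_submit_directory hyperparameters tell the container which script to run and where source.tar.gz lives. The helper name, instance type, channel names, runtime limit, and the server-address hyperparameter are assumptions. Hyperparameter values are JSON-encoded, as the SageMaker Python SDK does for framework estimators.

```python
import json


def training_job_request(job_name: str, image_uri: str, role_arn: str, source_s3_uri: str,
                         train_s3_uri: str, validation_s3_uri: str, output_s3_uri: str,
                         subnets: list, security_group_ids: list, server_address: str) -> dict:
    def channel(name: str, uri: str) -> dict:
        return {"ChannelName": name,
                "DataSource": {"S3DataSource": {"S3DataType": "S3Prefix", "S3Uri": uri,
                                                "S3DataDistributionType": "FullyReplicated"}}}

    return {
        "TrainingJobName": job_name,
        "AlgorithmSpecification": {"TrainingImage": image_uri, "TrainingInputMode": "File"},
        "RoleArn": role_arn,  # SageMaker execution role in the client account
        "HyperParameters": {
            "sagemaker_program": json.dumps("client.py"),
            "sagemaker_submit_directory": json.dumps(source_s3_uri),
            "server-address": json.dumps(server_address),  # hypothetical hyperparameter
        },
        "InputDataConfig": [channel("train", train_s3_uri), channel("validation", validation_s3_uri)],
        "OutputDataConfig": {"S3OutputPath": output_s3_uri},
        "ResourceConfig": {"InstanceType": "ml.m5.large", "InstanceCount": 1, "VolumeSizeInGB": 10},
        "StoppingCondition": {"MaxRuntimeInSeconds": 3600},
        # Run inside the client VPC so the Flower client can reach the server over the peering connection.
        "VpcConfig": {"SecurityGroupIds": security_group_ids, "Subnets": subnets},
    }
```

With the assumed-role SageMaker client, you would call sm_client.create_training_job(**training_job_request(...)). You could look up the scikit-learn image URI with the SageMaker Python SDK's sagemaker.image_uris.retrieve(framework="sklearn", region=..., version=...).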

Aggregate local models into a global model using Flower

We prepare code to federate the model on the server. This includes defining the federation strategy and its initialization parameters. We use the utility functions in the utils.py script described earlier to initialize and set model parameters. Flower lets you define your own callback functions to customize an existing strategy. We use the FedAvg strategy with custom callbacks for evaluation and fit configuration. See the following code:

The following two functions are mentioned in the preceding code snippet:

- fit_round – It sends the round number to the client. We pass this callback as the on_fit_config_fn parameter of the strategy, simply to demonstrate the use of that parameter.

- get_evaluate_fn – It's used for model evaluation on the server.
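The following dependency-free sketch shows both callbacks, using the call shapes Flower 1.x expects. on_fit_config_fn receives the round number and returns the config dict sent to clients. evaluate_fn receives (server_round, parameters, config) and returns (loss, metrics). To stay independent of scikit-learn, get_evaluate_fn takes hypothetical predict_proba and set_params callables instead of a model object.

```python
import math
from typing import Callable, Dict, List, Optional, Tuple


def fit_round(server_round: int) -> Dict[str, int]:
    """on_fit_config_fn: the returned dict reaches each client's fit() as `config`."""
    return {"server_round": server_round}


def get_evaluate_fn(predict_proba: Callable, set_params: Callable, X_test, y_test):
    """Build evaluate_fn(server_round, parameters, config) over server-only test data."""
    def evaluate(server_round: int, parameters: List, config: Dict) -> Optional[Tuple[float, Dict]]:
        set_params(parameters)  # load the aggregated global parameters
        eps = 1e-15
        probs = [min(max(predict_proba(x), eps), 1 - eps) for x in X_test]
        loss = -sum(t * math.log(p) + (1 - t) * math.log(1 - p)
                    for p, t in zip(probs, y_test)) / len(probs)
        accuracy = sum((p >= 0.5) == (t == 1) for p, t in zip(probs, y_test)) / len(probs)
        return loss, {"accuracy": accuracy}
    return evaluate
```

With Flower 1.3, you would pass these as fl.server.strategy.FedAvg(on_fit_config_fn=fit_round, evaluate_fn=..., ...) and start the server with fl.server.start_server(server_address=..., strategy=strategy, config=fl.server.ServerConfig(num_rounds=...)).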

For demo purposes, we use the testing dataset that we set aside during data preparation to evaluate the model federated from the client's account, and we communicate the result back to the client. However, in almost all real use cases, the data used in the server account is not split from the dataset used in the client account.

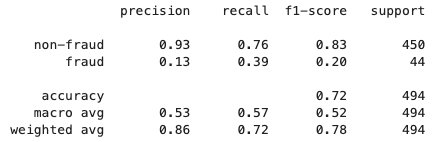

After the federated learning process is finished, SageMaker saves a model.tar.gz file as a model artifact in an S3 bucket in the client account. Meanwhile, a model.joblib file is saved on the SageMaker notebook instance in the server account. Finally, we use the testing dataset to test the final model (model.joblib) on the server. The testing output of the final model is as follows:
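Because fraud is the minority class, accuracy alone can be misleading on this test set. The following minimal sketch computes the positive-class metrics you might report for the final model's predictions. binary_report is a hypothetical helper; scikit-learn's classification_report gives these numbers along with a per-class breakdown.

```python
from typing import Dict, Sequence


def binary_report(y_true: Sequence[int], y_pred: Sequence[int]) -> Dict[str, float]:
    """Accuracy plus precision, recall, and F1 for the positive (fraud) class."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    tn = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 0)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return {"accuracy": (tp + tn) / len(y_true), "precision": precision, "recall": recall, "f1": f1}
```

For example, you could load the model with joblib.load("model.joblib") and call binary_report(y_test, model.predict(X_test)).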

Clean up

When you're done, clean up the resources in both the server account and the client account to avoid additional charges:

- Stop the SageMaker notebook instances.

- Delete the VPC peering connections and the corresponding VPCs.

- Empty and delete the S3 bucket you created for data storage.
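If you scripted the setup, you can script the cleanup as well. The following minimal sketch cleans up one account. It assumes Boto3 clients for that account are passed in and that you recorded the resource IDs during setup; all names are hypothetical. Deleting a peering connection from either side removes it. A versioned bucket would also need its object versions deleted before delete_bucket succeeds.

```python
def clean_up(sagemaker_client, ec2_client, s3_client, notebook_names, peering_ids, vpc_ids, bucket):
    """Stop notebooks, delete peering connections and VPCs, then empty and delete the bucket."""
    for name in notebook_names:
        sagemaker_client.stop_notebook_instance(NotebookInstanceName=name)
    for peering_id in peering_ids:
        ec2_client.delete_vpc_peering_connection(VpcPeeringConnectionId=peering_id)
    for vpc_id in vpc_ids:
        # Fails while the VPC still has dependencies (subnets, gateways, ENIs); remove those first.
        ec2_client.delete_vpc(VpcId=vpc_id)
    # A bucket must be empty before deletion; each list_objects_v2 page holds at most 1,000 keys,
    # which matches the delete_objects limit per request.
    paginator = s3_client.get_paginator("list_objects_v2")
    for page in paginator.paginate(Bucket=bucket):
        keys = [{"Key": obj["Key"]} for obj in page.get("Contents", [])]
        if keys:
            s3_client.delete_objects(Bucket=bucket, Delete={"Objects": keys})
    s3_client.delete_bucket(Bucket=bucket)
```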

Conclusion

In this post, we walked through how to implement federated learning on SageMaker by using the Flower package. We showed how to configure VPC peering, set up cross-account access, and implement the FL client and server. This post is useful for those who need to train ML models on SageMaker using decentralized data across accounts with restricted data sharing. Because the FL in this post is implemented on SageMaker, many more SageMaker features can be brought into the process.

Implementing federated learning on SageMaker lets you take advantage of the advanced features that SageMaker provides across the ML lifecycle. There are other ways to achieve or apply federated learning on the AWS Cloud, such as using EC2 instances or running at the edge. For details about these alternative approaches, refer to Federated Learning on AWS with FedML and Applying Federated Learning for ML at the Edge.

About the authors

Sherry Ding is a senior AI/ML specialist solutions architect at Amazon Web Services (AWS). She has extensive experience in machine learning and holds a PhD in computer science. She mainly works with public sector customers on various AI/ML-related business challenges, helping them accelerate their machine learning journey on the AWS Cloud. When not helping customers, she enjoys outdoor activities.

Lorea Arrizabalaga is a Solutions Architect aligned to the UK Public Sector, where she helps customers design ML solutions with Amazon SageMaker. She is also part of the Technical Field Community dedicated to hardware acceleration and helps with testing and benchmarking AWS Inferentia and AWS Trainium workloads.

Ben Snively is an AWS Public Sector Senior Principal Specialist Solutions Architect. He works with government, non-profit, and education customers on big data, analytics, and AI/ML projects, helping them build solutions using AWS.
